The Paradox of Prevention: How Automation‑Driven Reliability Is Making Industry More Fragile

Continuing the Series: Finding the Optimal Balance for Industrial Resilience in a Short-Tenure Workforce

We are not automating ourselves into inefficiency. We are automating ourselves into irrelevance.

By chasing marginal gains in routine reliability, we have traded away the mechanisms that protect an organization from the unknown: human resilience and ingenuity.

We are increasing systemic fragility at the very moment when human judgment and organizational adaptability are most needed. We have optimized for a flawless Tuesday, yet we are bankrupting the expertise required for a catastrophic Friday.

The "Dark Factory" isn't the future of Industry 5.0; it is the final, most expensive mistake of Industry 4.0. This obsession with "lights out" production ignores a devastating, generation-scale shift in the workforce:

- The old problem was atrophy: experts lost skills they once had.

- The new problem is absence: an entire generation never develops them.

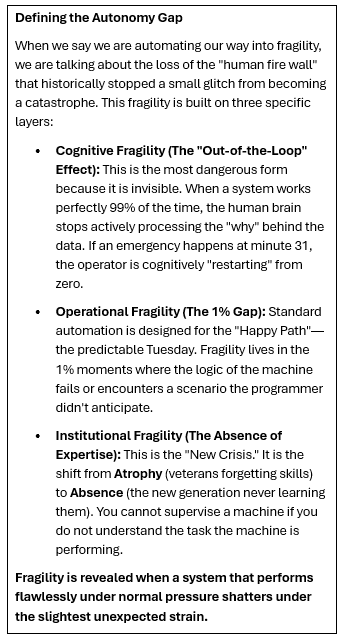

From Atrophy to Absence: The Autonomy Gap

For veteran operators, automation eroded skills they once mastered.

For new entrants, it prevents expertise from forming in the first place. By stripping the "why" out of work, we have created an Autonomy Gap. Systems run smoothly, but the humans inside them cannot explain, challenge or recover them. When the 1% catastrophe hits, there is no one left who knows what to do.

Don Glaser, founder of Simulation Solutions, who has spent decades training thousands of operators, states plainly:

"The new generation doesn't have the same baseline. They didn't grow up tinkering, troubleshooting, or building a mental map of how systems behave. They respond to screens; they don't yet read the process."

We optimized for safety and reliability and, in doing so, often removed the conditions for learning. This created the Autonomy Gap, a fundamental breakdown in the human-machine loop:

- The machine executes

- The human observes

- No one understands

The Eulogy of the Operator

I saw the first signs of this Efficiency Trap while visiting a Shell refinery in 2015. I call it the "Eulogy of the Operator."

I watched a veteran operator peel a yellow sticky note from his control panel to remind himself of a critical recovery sequence. He used to run the system through deep process intuition; now, he was reduced to waiting for alarms. When one triggered, he followed handwritten instructions for a task he once performed instinctively. His notes were not a convenience; they were evidence that the operator had been demoted from a pilot to a passenger. His title had been changed to "Optimizer." It sounded like a promotion, but in reality, it was a eulogy for his agency. This was my lead indicator of the Baseline Shift: a systemic trend in which the move toward total automation doesn't just simplify work, it actively erodes the mental map required to perform it. Monitoring, it turns out, is a terrible way to stay sharp.

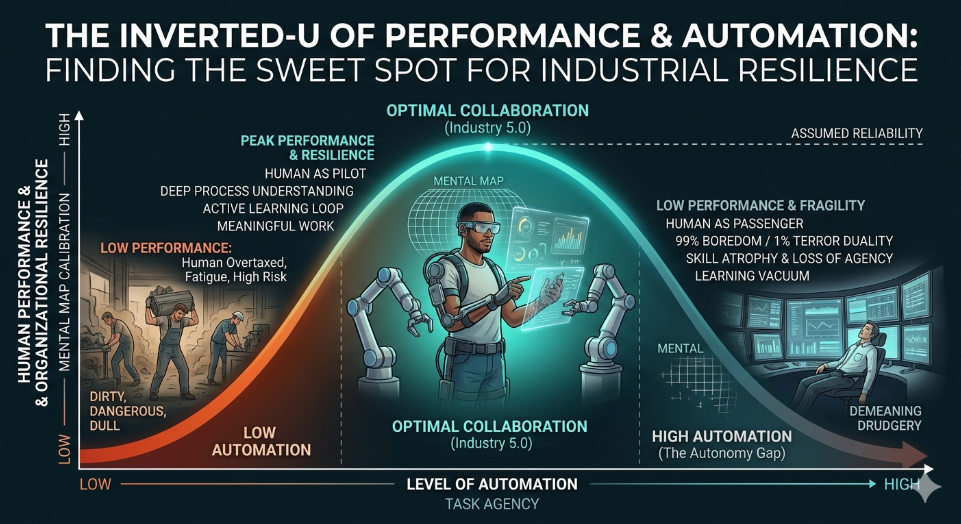

The Science of Boredom: The Inverted-U

We often assume that more automation always equals better results. The reality follows a well-established principle of human performance known as the Yerkes-Dodson Law. This inverted-U curve reveals that human performance peaks at a moderate level of challenge and stimulation.

When automation is too low, workers are overwhelmed by the dull, dirty and dangerous. But as we push to the far right of the curve (total automation), we enter the realm of the demeaning. Performance drops off a cliff because the human mind requires a baseline of challenge to remain alert and engaged.
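The shape of that curve can be sketched in a few lines of Python. This is a toy model, not a fitted one: the Gaussian form, the `peak` and `width` parameters, and the assumption that the challenge left to the human falls linearly as automation rises are all illustrative choices, not values taken from Yerkes and Dodson.

```python
import math


def performance(challenge: float, peak: float = 0.5, width: float = 0.2) -> float:
    """Toy inverted-U: performance is highest at a moderate level of challenge.

    The Gaussian shape and the default peak/width are assumptions made
    purely for illustration.
    """
    return math.exp(-((challenge - peak) ** 2) / (2 * width ** 2))


# Assumption: the challenge remaining for the human is 1 - automation level.
for automation in (0.0, 0.25, 0.5, 0.75, 1.0):
    challenge = 1.0 - automation
    print(f"automation={automation:.2f}  performance={performance(challenge):.2f}")
```

Under these assumptions, performance is lowest at both extremes: with no automation the operator is overloaded, and with total automation there is too little challenge left to stay engaged.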

The Core Principle: When we automate to eliminate the dull, dirty, and dangerous, we often accidentally create the demeaning. This pushes workers off the performance cliff into a Learning Vacuum where they lack the mental map to intervene during the 1% catastrophe.

Paradox of Prevention

The paradox of prevention is this: the more we automate, the more we depend on human judgment. Automation keeps systems running by smoothing over small problems. But those small problems are often the first signs of deeper failure, signs a human would catch. So the system appears stable, even as risk quietly builds, right up to the point where it breaks.
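The masking effect described above can be illustrated with a toy control loop. This is a minimal sketch under stated assumptions: the `simulate` function, the linear wear rate, and the output limit are hypothetical and do not model any real plant or controller.

```python
def simulate(steps: int = 12, setpoint: float = 50.0,
             wear_per_step: float = 0.06, max_output: float = 100.0):
    """Return (step, process_value, controller_output) rows for a toy loop.

    Assumptions: equipment efficiency falls linearly with wear, and the
    controller can always compute the exact output needed to hold setpoint
    until it hits its output limit.
    """
    rows = []
    for step in range(steps):
        # Equipment degrades a little every step (floored to avoid divide-by-zero).
        efficiency = max(0.1, 1.0 - wear_per_step * step)
        # Automation compensates by driving the actuator harder, up to its limit.
        output = min(max_output, setpoint / efficiency)
        # This is the value the operator sees on the screen.
        process_value = efficiency * output
        rows.append((step, round(process_value, 1), round(output, 1)))
    return rows


for step, process_value, output in simulate():
    print(f"step={step:2d}  process_value={process_value:5.1f}  controller_output={output:5.1f}")
```

In this sketch the displayed process value sits exactly on setpoint while the controller output climbs in the background. The first visible deviation appears only once the controller has no headroom left, which is the point where the early warning signs have already been spent.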

The True Measure of Progress: The Resilience Trade-off

Automation follows a predictable, deceptive pattern: it builds a fortress of reliability while simultaneously hollowing out the foundation of resilience. To understand why systems fail in crisis, we must differentiate between two distinct types of protection:

- Routine Reliability: The ability to do the same thing a million times perfectly ("Flawless Tuesday"). This rises with automation.

- Resilient Ingenuity: The ability to successfully navigate the one event that has never happened before ("Catastrophic Friday"). This plummets as the human is pushed out of the loop.

This divergence is the core of our modern fragility. As systems grow more complex and automated, the human's role as the final prevention layer becomes more critical, not less. True progress isn't automating the human out; it is automating the environment so the human can operate at a higher level of complexity.

The Irony We Engineered

In 1983, Lisanne Bainbridge described the Ironies of Automation: the more reliable a system becomes, the less prepared humans are to intervene when it fails. We automated "thinking" because we believed human judgment was inadequate, yet we ask those same humans to verify the machine's decisions, the very task we deemed them unable to do.

The paradox of prevention is this: the more automated and complex our systems become, the more crucial it is to have a human in the role of the final prevention layer. Automation doesn't merely fail to warn; it actively conceals. It masks degradation by compensating for variables, hiding trends until they are beyond recovery, and in doing so it camouflages its own fragility.

Critics often point to aviation or nuclear power as evidence that high automation can coexist with high performance. However, these sectors are the exceptions that prove the rule. They do not achieve resilience because of automation; they achieve it by spending millions to combat the very Learning Vacuum that automation creates through non-negotiable, simulator-based recertification. For the average industrial plant or mill, where such high-fidelity training is often the first budget cut, the Vacuum isn't a theory; it is the default state of the operation.

The Silent Crisis: The Learning Vacuum

Leaders assume expertise is a natural byproduct of time on the job. That assumption is dead. We are currently fighting a three-front war against human cognition, and we are losing.

- The Forgetting Curve: Historically, we fought the Ebbinghaus Forgetting Curve, where without active reinforcement, people forget most of what they learn within days.

- The Atrophy Curve: As automation took over, we fought Skill Atrophy, where veteran operators slowly lost the "edge" of skills they once mastered through lack of use.

- The "Never-Getting" Floor: Today, we face a new, more terminal front. Our current designs ensure that a new generation of operators never acquires the deep, tacit understanding of system behavior in the first place. There is no curve to climb; they are trapped on a flat line of "how-to" compliance. This is the birth of the Learning Vacuum: a measurable cognitive gap between following pre-set procedures and possessing the system-level expertise required to intervene when the unexpected happens.
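The first front in the list above, the Forgetting Curve, is often approximated as exponential decay. The sketch below uses that common simplification rather than Ebbinghaus's own fitted formula, and the `stability_days` values are hypothetical numbers chosen only to show the effect of reinforcement.

```python
import math


def retention(days_elapsed: float, stability_days: float) -> float:
    """Fraction retained after a delay, using the simplification R = exp(-t / S).

    stability_days (S) is an assumed parameter: larger values stand in for
    knowledge that has been actively reinforced.
    """
    return math.exp(-days_elapsed / stability_days)


# Hypothetical comparison: unreinforced vs. reinforced learning after two days.
for label, stability_days in (("no reinforcement", 1.0), ("with reinforcement", 5.0)):
    print(f"{label}: {retention(2.0, stability_days):.0%} retained after 2 days")
```

The model only describes the first front. It has nothing to say about the "Never-Getting" Floor, because a curve that starts at zero has nothing to decay.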

We have moved from a workforce that forgets to a workforce that never knows. This crisis is accelerated by the Short-Tenure Workforce. As 20-year career paths are replaced by 3-to-5-year rotational roles, the natural transfer of institutional memory is broken. We can no longer rely on "years of exposure" to build the deep expertise that once served as our primary safety layer. We taught people what to do and assumed they would learn the why through years of experience. While high-reliability industries like aviation and nuclear power combat this vacuum through massive, non-negotiable investments in simulator-based recertification, the average industrial plant has historically offloaded this cost to "on-the-job" training that no longer exists.

When you restrict a worker's focus to isolated, automated steps, the "how-to," without the "why," everyone suffers. We are essentially sending passengers to do the work of pilots, and they will experience the duality of 99% boredom and 1% terror. Without a mental map of the system's logic, workers are no longer a safeguard; they are spectators waiting for a disaster they lack the baseline expertise to even recognize.

Beyond the Process: A Crisis of Meaning

Organizations must fundamentally rethink orientation and training. We can no longer rely on "time in the seat" to fill the gaps. We must pivot to new training methods that deliberately provide the "why" and the tacit knowledge that automation has stripped away. If your training doesn't bridge the Autonomy Gap from day one, you aren't building a workforce; you are managing a learning vacuum.

This is the hidden tax of our current strategy. It is not just a safety risk; it is a crisis of meaning.

Historically, we used technology to eliminate the dull, dirty, and dangerous. But in our rush for "foolproof" systems, we have introduced a fourth, more corrosive "D": demeaning. When we strip the challenge out of a role, we strip the meaning out of the person. People get bored when they are not challenged, and disengagement is the natural response to a system that no longer requires or respects human agency. People are leaving not because they want more pay, but because we have hollowed out their judgment and replaced it with a script. We have brought drudgery back to the frontline, not through physical labor, but through mental irrelevance.

Three Non-Negotiables for Leaders

- Never automate the 99% at the cost of the 1%. The rare event is the only one that matters for resilience. If your system cannot be recovered by the people inside it when the automation fails, it is fragile by design. The validation of this principle lies in "Intervention Competence": the measurable ability of a practitioner to successfully navigate a "cold-start" recovery without digital assistance.

- The human is the fail-safe, or there is none. Authority without expertise is theater. If the operator cannot act independently of the system, they are not a safeguard; they are a spectator. The true test of a system's fragility is the confidence of the people running it. We measure resilience by a practitioner's ability to take control during a crisis and to know exactly why they are acting.

- Design for learning, not just output. Every system either builds human capability or extracts it. If your people do not leave the work smarter than they started, the system is actively accumulating risk. Success is verified when the "Learning Vacuum" begins to close, evidenced by a workforce that can explain the underlying logic of the process, rather than just reciting the steps of a procedure.

The Litmus Test

Before your next automation investment, ask:

- Does this system make people more capable or merely less necessary?

- When the automation fails, will my team know what to do or wait for instructions that are no longer coming?

Remember the GPS. It makes you a better driver until the signal drops. That is when you realize you never learned the roads. Will your organization be brittle or resilient when the screens go dark?

Coming Next: The Engine of the Tragedy

We have shown you the curve: how automation helps until it hollows out the human capability it depends on. But the Inverted-U doesn't happen by accident. Something is pushing organizations past the tipping point, and it has a name.

It is called Digital Taylorism.

This is the management logic that treats every human task as a problem to be automated, every judgment call as a process to be standardized and every worker as a variable to be controlled. It is the invisible design choice embedded in how we build systems, how we staff them and how we measure their success.

Next time, we pull the engine apart to see why leaders keep walking into the trap, and how we can finally start designing for competence instead of just compliance.

Bibliography

Bainbridge, L. (1983). Ironies of Automation. Automatica, 19(6), 775–779. (The foundational text on why reliable systems make humans less prepared for failure).

Ebbinghaus, H. (1885). Memory: A Contribution to Experimental Psychology. (The origin of the Forgetting Curve and the need for active reinforcement).

Hollnagel, E. (2014). Safety-I and Safety-II: The Past and Future of Safety Management. Ashgate Publishing. (Context for the "Resilient Ingenuity" versus "Routine Reliability" trade-off).

Reason, J. (1997). Managing the Risks of Organizational Accidents. Ashgate Publishing. (The definitive guide to the "Prevention Layer" and systemic defenses).

Yerkes, R. M., & Dodson, J. D. (1908). The Relation of Strength of Stimulus to Rapidity of Habit-Formation. Journal of Comparative Neurology and Psychology, 18(5), 459–482. (The scientific basis for the Inverted-U Performance Curve).