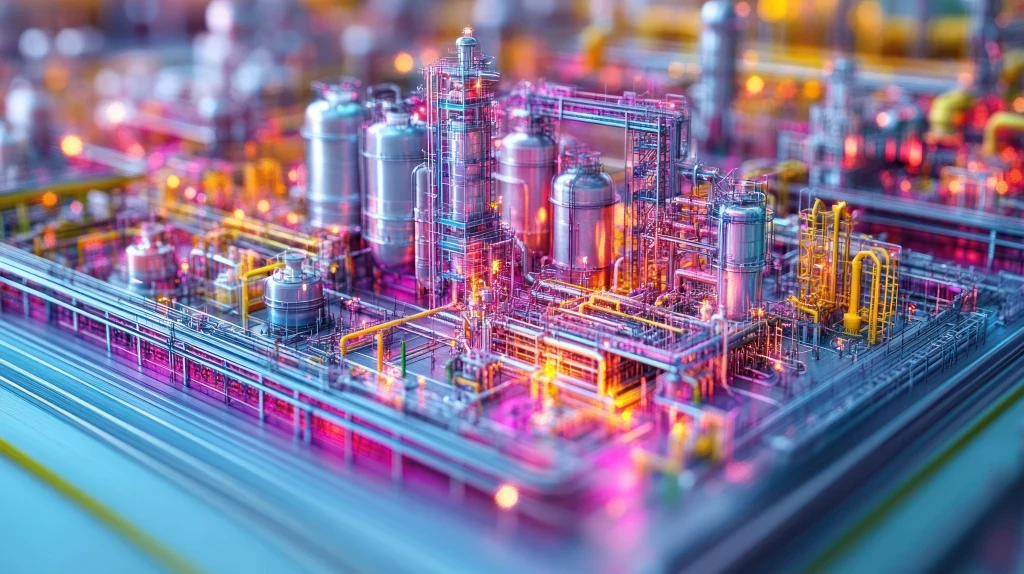

I've always been fascinated by the relationship between what we call reality and the representation of it that lives within our computers in the form of pure digital information. Sometimes we use digital tools to "alter" reality, designing and building something digitally that will, someday, form part of the real world.

Other times we use computers to capture reality's features and create a model for later study, safe simulation and "what if" analysis. In both of these unidirectional processes there is always some kind of gap: assets that do not perfectly match the initial design, or data models that do not capture the full picture.

As fun as getting sucked into this digital metaphysics might be, finding practical methods to narrow this gap is the key to gaining precision, predictability and knowledge. These methods should:

1) Help reduce the gap within each of these processes individually.

2) Provide a feedback loop that further reduces the overall gap with each iteration.

Mind the gap!

In my previous post, we examined how the technologies we use to design and model facilities have evolved, along with those we use to build an ecosystem of documentation and information around them.

PDMS (Plant Design Management System) representations of these facilities are created from this information to provide functional, navigable views of the plant. Design documents and drawings marked as-built should match the final constructed result. Yet we know that in both cases there are still discrepancies with what was actually built, details that remain uncaptured and obstacles yet to be overcome.

Technology has evolved over the years to give us ways of reducing the gap. CAD/CAM technologies, for instance, allow elements to be manufactured, "brought into reality", exactly as the digital model specifies. Sure, we still can't dream of gigantic robots building a gas plant for us, but discrete elements and equipment are built this way today.

Lately, I have also been introduced to several 3D laser scanning technologies that allow facility owners to scan their assets and obtain an accurate 3D model of them in return. These technologies play a crucial role in creating an accurate model to which information can be related, especially during the operational phase of the plant.

A loop is a circle, an endless iteration.

As a document management specialist and software developer, I've been mostly focused on the first stages of a plant's lifecycle: in other words, the EPC (engineering, procurement and construction) infancy of a facility. During the operational phase, however, capturing accurate information about the actual status, form and elements of the facility is absolutely critical.

Doing so allows us to generate an up-to-date "universe" of documentation and information that can be correctly related to the actual elements of the facility, and to feed corrections back into the information systems whenever corrective action must be taken.

This is why we are investing heavily not only in our own state-of-the-art document management systems for every stage of a facility's lifespan, but also in integrating them with the software vendors that provide this type of information capture and analysis.

From conversations with customers, I firmly believe that providing this feedback loop in as accessible and frictionless a form as possible is key for facility owners. I believe in narrowing the chasm between what we have designed and what we have built, between how things actually are and what we believe them to be. By completing this process time and time again, like the Greek ouroboros perpetually eating its own tail, we can continually improve the economic and HSE (health, safety and environment) aspects of the environments we work in.

Big data means consciousness

It should come as no surprise that big data is playing such a large role in the oil and gas industry. No one is better prepared to understand the benefits of this technology than those whose business is to extract massive amounts of raw natural resources and refine and transform them into derivative products, ready for consumption.

Much like our own cognitive processes, big data technology extracts and unifies vast amounts of data, then processes, combines, analyzes, transforms and rarefies it until it yields usable knowledge.

Now think of the sheer volume of data we capture every second in an average facility, from equipment sensors and metering to the methods we now have for monitoring human activity and vital signs. Every byte of it becomes meaningful when fed into the loop described here.

Josemaria Sotomayor, Senior Systems Engineer, Enterprise Content Division, EMC