[INTERVIEW] Translating The Digital Oilfield Using Natural Language Generation

How can cutting-edge software translate dashboard lights and real-time 1s and 0s into reports that turn three hours of an engineer’s time into 60 seconds and could give your asset 40 hours extra up time a month? Find out here as we speak to Arria NLG, specialists in natural language generation.

Speaker key

TH Tim Haidar, Editor In Chief, Oil & Gas IQ

JB John Bell, SVP Business Development, Arria NLG

TH Hello and welcome. This is Tim Haidar and today I’m going to be speaking with John Bell who is the SVP for Business Development at Arria NLG Plc. John’s going to be speaking ahead of the Digital Oil Fields conference that we’ve got coming up later on this year. John, thanks very much for joining us today.

JB Yes thanks, my pleasure.

TH First of all, NLG, what does the acronym stand for and can you give us a succinct and layman’s term description of what it means?

JB Sure. So NLG, straightforward enough, it stands for Natural Language Generation and in terms of what it does, it’s the conversion of raw data - data that’s generated from computers - taking that data and turning it into text. And then that text is output as natural language as if it were the spoken word.

TH Can you give us an example of how this would work?

JB Yes. So a fairly simple example would be using data that’s monitored from a particular asset in an oil field - like a compressor or a pump - and as that data is monitored in real time it usually just fires off things like vibration rate or pressure or temperature, fairly simple measures. Those come through 24/7 and usually end up in a dashboard and that’s fine, as long as somebody is looking at the dashboard at the time. But what we’ve established is we can take that data and turn it into meaningful language that an engineer might have created themselves and we can fashion a process around that.

So rather than having a static dashboard, you can have live language reporting to you via a whole variety of different media types, and that language can come through and tell you exactly what the status of a particular asset is. It can also inject some predictive analytics into that language. It will say things like "the asset is at this stage now but if it continues on this path it may fail in 28 days." And that sort of language is much more meaningful to an engineer than just a static dashboard.
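As a rough illustration of the idea John describes, the sketch below turns a handful of sensor readings into a sentence with a simple forecast. It is not Arria NLG's method: the `Reading` type, the function names, the alarm limit and the straight-line trend fit are all assumptions made for this example, and a real system would use far richer analytics and language rules.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Reading:
    day: float    # time of the reading, in days (hypothetical unit)
    value: float  # sensor value, e.g. vibration in mm/s


def days_until_threshold(readings: List[Reading], threshold: float) -> Optional[float]:
    """Fit a least-squares line and estimate days from the last reading
    until the value reaches the threshold. Returns None if there is no
    upward trend or too little data."""
    n = len(readings)
    if n < 2:
        return None
    mean_x = sum(r.day for r in readings) / n
    mean_y = sum(r.value for r in readings) / n
    sxx = sum((r.day - mean_x) ** 2 for r in readings)
    if sxx == 0:
        return None
    slope = sum((r.day - mean_x) * (r.value - mean_y) for r in readings) / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing_day = (threshold - intercept) / slope
    return max(crossing_day - readings[-1].day, 0.0)


def describe(asset: str, measure: str, unit: str,
             readings: List[Reading], threshold: float) -> str:
    """Turn the readings into a short status sentence with a forecast."""
    latest = readings[-1].value
    text = f"{asset} {measure} is currently {latest:.1f} {unit}."
    if latest >= threshold:
        return text + f" This is at or above the {threshold:.1f} {unit} limit."
    remaining = days_until_threshold(readings, threshold)
    if remaining is None:
        return text + " No upward trend is visible in the recent data."
    return text + (f" If it continues on this path it may reach the "
                   f"{threshold:.1f} {unit} limit in about {round(remaining)} days.")


# Hypothetical daily vibration readings for a compressor (illustrative only).
history = [Reading(0, 2.0), Reading(1, 2.1), Reading(2, 2.2),
           Reading(3, 2.3), Reading(4, 2.4)]
print(describe("Compressor A", "vibration", "mm/s", history, threshold=5.2))
```

The design choice here is to keep the analytics (the trend fit) separate from the wording (the sentence templates), so either can be changed without touching the other.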

TH So it’s trying to put into workable sentences, in whatever the target language might be, what would otherwise be reams of data that you would need to take a fine-tooth comb to in order to make any sense of and then interpret?

JB Yes. I think typically if you look at the workflow, if you look at what actually happens, an asset on a platform might fail and an engineer will get some kind of an alert, usually a flashing light somewhere. And then with a mixture of charts and tables and other media, the engineer will have to analyse that data and interpret it into what the condition is, what the status is, and after that he or she will typically sit down and physically write out a report - whether it be an email or a formal incident report. And this, we’ve discovered, can take absolutely hours. You’re talking three to five hours for an incident to end up as a written report. NLG automates that data collection, automates the interpretation and analysis of that data and then generates that physical report, all in under a minute, so it’s taking away that laborious task of data gathering from the engineer. Because the type of incidents we’re looking at are quite repetitive, we can actually collect that knowledge and, using that knowledge, generate a report as though an engineer had written it himself.

TH Interesting. So aside from maybe safety incidents, where else can NLG apply to the oil and gas industry?

JB Well, certainly right across the asset arena, so whether it’s well integrity or asset integrity or real-time drilling, anything where you’re working with machine code or machine output from sensors. This is just a faster and more consistent way of reporting than having different interpretations of the raw data. And it is adding that virtual engineer into your workforce.

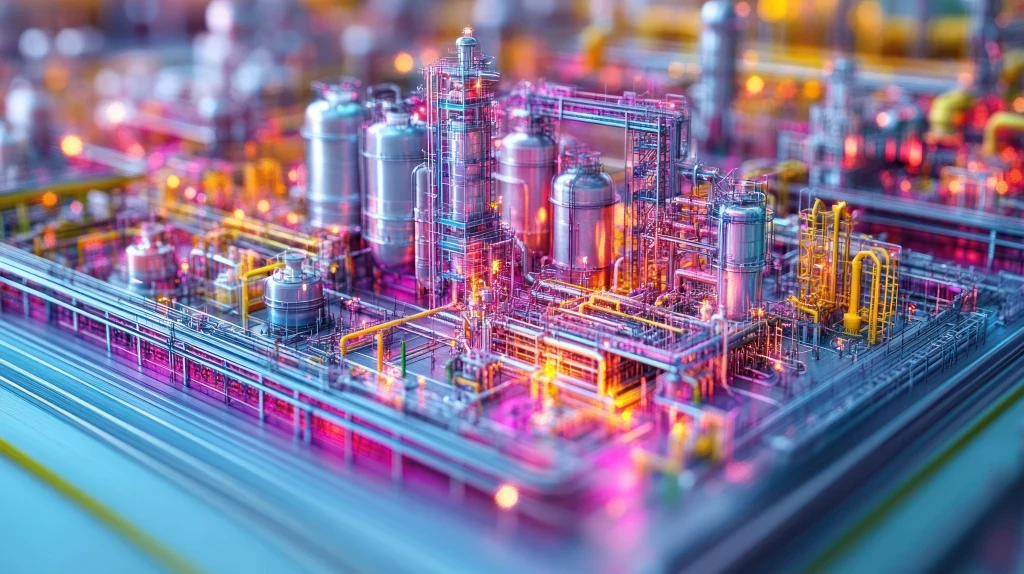

For so many years we’ve heard about an ageing workforce and how hard it will be to replace all of that lost knowledge. This could be a method that people might look at in terms of creating that virtual engineer which is doing some of the laborious tasks and leaving the engineer to really focus on what’s important: the remedy to the event, the remedy to the failure. So you can use that right across upstream and downstream, certainly in subsea integrity right through to refinery, anything really that’s got machines that have sensors is where it can be applied in the engineering sense.

It can then be used in all kinds of data mining for marketing. It can be used in data mining for certain contractual issues, like EPC contracts where you have certain contracts based on deliverables. You can automate the search that those deliverables have been made, you can automate the type of report that you might generate to warn a vendor, to warn a subcontractor that they’re outside of contract terms. And again, this is just really mining through masses and masses of data and turning it into what we all use: language. It’s been the best form of communication since the dawn of man.

TH So that all makes good sense, but a lot of people that are going to be listening to this will never have heard the like before. This is an oil and gas industry where prices are falling and margins are tightening. If I want to take something like this and introduce it into my systems on a 30 year old asset, you best show me some tangible ROI. Do you have any examples of this?

JB Well, some companies are far more efficient than others in getting that incident report out and being consistent with it. But we struggle to see anybody doing it in under two hours. The norm, from what we’ve observed, is closer to three to four hours. We can reduce that down to one minute. The corollary of that is we get to a remedy with an equal time saving, so taking two to three hours out of an incident to remedy and working on ten to 20 incidents per month, you can very rapidly see that if you can transfer that saving in data gathering and reporting, you could be saving 40 hours a month quite easily. And that’s 40 hours a month added production time.

Depending on what your barrels per hour rate is, then you can quickly see that 40 hours could be a very, very significant sum and far and away paying for the investment in NLG easily within six months.
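The arithmetic behind that estimate can be sketched in a few lines. The hours saved per incident and the incidents per month come from John's figures above; the production rate, margin per barrel and investment cost are purely hypothetical placeholders chosen for illustration, not figures from Arria NLG or any real asset.

```python
def monthly_hours_saved(hours_saved_per_incident: float,
                        incidents_per_month: int) -> float:
    """Hours of reporting time taken out of incident handling each month."""
    return hours_saved_per_incident * incidents_per_month


def payback_months(investment: float, hours_saved: float,
                   barrels_per_hour: float, margin_per_barrel: float) -> float:
    """Months needed for the value of the extra uptime to cover the investment."""
    monthly_value = hours_saved * barrels_per_hour * margin_per_barrel
    if monthly_value <= 0:
        raise ValueError("monthly value of saved hours must be positive")
    return investment / monthly_value


# Range quoted in the interview: 2-3 hours saved, 10-20 incidents a month.
low = monthly_hours_saved(2, 10)    # 20 hours
high = monthly_hours_saved(3, 20)   # 60 hours
print(f"Hours saved per month: {low:.0f} to {high:.0f}")

# The 40-hour figure sits inside that range, e.g. 2 hours x 20 incidents.
typical = monthly_hours_saved(2, 20)

# HYPOTHETICAL inputs: 500 barrels/hour, 20 USD margin/barrel, 1,000,000 USD cost.
months = payback_months(1_000_000, typical, barrels_per_hour=500,
                        margin_per_barrel=20)
print(f"Payback under these assumed inputs: {months:.1f} months")
```

Swapping in an asset's own production rate and margin shows quickly whether the six-month payback John mentions is plausible for that asset.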

TH Now, you mention the production capacity and what 40 hours extra uptime could do for the bottom line. Which areas, aside from everything asset-related, do you think could benefit the most from this kind of digital oil field technology implementation? Refining springs instantly to mind.

JB Yes, I think with refining, you have to be running at an efficiency rate in excess of 95% to really make any serious money, to make any profit at all in some cases so any downtime, whatever the cause, immediately hits the bottom line. So NLG can help, whatever that workflow process is, to identify where you have logjams in the systems, logjams in your current processes. So it could be that you’re using process models and reporting that in natural language to quickly identify whether the process model is going to work, whether there are other alternatives and then to run those processes in accordance with what NLG is telling you to do.

Very often you might write a process model which you have to test repeatedly before you get the result you’re looking for, whereas NLG can help you identify that much earlier because it’s doing that interrogation of how the process is working. Certainly, when you’re changing the process because of the feedstock you may have received, NLG could help you with a fairly early warning in terms of what processes you have to change based on the new feedstock you’ve got.

So right throughout the supply chain from finding oil to drilling oil to extracting oil to transporting it through to refining it, if you think of automated reports where usually a specialist would have to intervene, whether it be a supply chain specialist, a legal specialist, a driller, a refiner, whatever their specialty is, any task that is intensive on data acquisition, intensive in evaluation of that data, interpretation and analysis, NLG can automate that and come out with exactly what that specialist might produce as a report.

TH You touched on the Great Crew Change earlier, how could NLG help with knowledge retention?

JB NLG learns from the engineer or the specialist in their work activity, learns from that work activity and encompasses that in the software as its knowledge base. It’s a knowledge creation system. How are companies really trying to capture that information they may lose in the attrition of retirement? Just databases alone and bigger and bigger data isn’t really answering the knowledge question. When expert systems such as NLG can fill some of that gap, can fill some significant parts of that gap and allow the engineers to do what they really are supposed to be doing, that’s where you’re going to get the greatest kind of knowledge retention and the greatest ROI.

TH What would your advice be for any company that’s starting out on the road towards digital oil field integration or the kind of implementation of technology that you’re talking about?

JB Digital oil fields have matured. It’s been a topic I would think for at least 20 years. I can remember the first one that I was involved with about 16 years ago and that wasn’t the first in the industry by a long shot.

Having worked in this area for a while now, I think the keys are really to have a clear vision, to measure yourself at all times with what you’re doing to make sure you’re on track to meet the objectives you set out to achieve and to not be fearful of change and embrace new innovation.

But I think when you go into it, have a clear focus of what you want it to achieve, always ask the question every day, why are we doing this? Why are we investing so heavily, what is our expected outcome? And then be able to measure how you’re progressing to that expected outcome. And if you’re not meeting what you expected, then don’t be frightened to make changes and be innovative.

Years ago we spent so much time finding out how we could merge data, how we could get data to communicate with each other. I think that’s cracked. We’ve cracked that code. I think merging data is fairly straightforward these days with message buses and web services and all types of open application programming interfaces (APIs). So that’s no longer a logjam.

Where I’m seeing the biggest roadblock now is that people are going almost dashboard and screen dizzy with the amount of data they’re having to consume, because it’s all in a very highly visual presentation, and yet when somebody comes to explain what they’ve seen visually, they turn back to language. NLG can’t do everything, but I think it’s a very, very significant addition to the analytics that people are putting into digital oil fields. I think it’s just another layer of sensible communication of that information and this time it’s using the most common form of communication of information, which is language.

TH Great. John, thanks so much for your time today. We appreciate that and we look forward to meeting you at the conference in a couple of months’ time.

JB I look forward to it too. Thanks very much. Thanks Tim.